Most discussions about AI video tools focus on spectacle, but everyday users usually care about something simpler. They already have an image they like and want to turn it into a short, usable video without entering a complicated production chain. That is the practical promise behind Image to Video AI. Instead of treating motion as a separate discipline that begins after the still asset is finished, it treats motion as the next layer of the same asset.

That change matters for more than convenience. It changes how visual work gets planned. A design team can think of one image as the start of several deliverables rather than only one. A creator can publish both a static post and a motion version from the same source. A product marketer can reuse approved photography in a more dynamic format without asking for a new shoot every time a campaign needs movement.

In my observation, the strongest image-to-video tools are not necessarily the ones that promise the most cinematic drama. They are often the ones that make the relationship between source image, prompt, and output easiest to manage. The better the platform supports that relationship, the more often users can turn one good still into a genuinely useful short clip.

Why This Category Feels More Mature In 2026

Image-to-video generation is no longer interesting only because it exists. It is interesting because it now fits recognizable creative routines. Users understand the problem it solves.

Approved Visual Assets Already Exist Everywhere

Brands, creators, and individuals already have image libraries. Product photos, mood shots, portraits, design comps, illustrations, and concept frames are sitting in folders waiting to be reused. The rise of image-to-video tools turns those folders into motion opportunities.

Short Video Demand Keeps Expanding

Distribution habits continue to favor moving content. Even when the motion is modest, it can increase attention and make a static asset feel newly relevant on modern platforms.

The Best Tools Reduce Process Friction

Many users do not want to learn a full animation environment just to get one short clip. A platform that narrows the workflow to a few understandable steps becomes far more attractive in real usage.

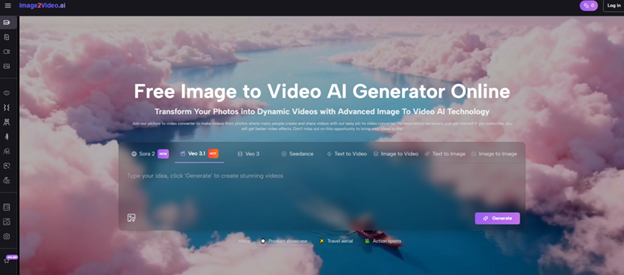

How The Official Workflow Supports Fast Creation

A tool becomes easier to trust when its operating logic is clear. Based on the platform flow, the process stays grounded in a short, web-based sequence.

Upload A Still Image As The Base Layer

The first step is image upload. This source image defines the subject and visual identity of the output. It is the origin point from which the system derives motion rather than a loose inspiration reference.

Describe Movement With A Prompt

The second step is telling the system what kind of motion should happen. In practical terms, the prompt works best when it describes motion behavior rather than only image content. Camera push, subject turn, weather drift, environmental energy, or fabric movement are all more useful than vague adjectives alone.

Generate The Video Automatically

After submission, the system creates the clip by interpreting the uploaded image and prompt together. This is where the platform converts a static frame into a short time-based visual.

Preview The Output And Export It

The final step is review and download. A short workflow is especially important here because it lowers the cost of trying another variation when the first pass is close but not ideal.

A Compact Flow Encourages Better Experimentation

Longer workflows tend to make users overly cautious. Short workflows make it easier to test alternative motion directions, which often leads to better final judgment.

A Practical Top Ten For Image Based Video Creation

There is no single universal ranking for every user, but there is a useful shortlist for people who need to understand the current field. The ten below cover the main platforms worth watching right now.

| Rank | Platform | Use Style | Key Advantage |
| --- | --- | --- | --- |
| 1 | Image2Video AI | Direct browser creation | Clear image-first process |
| 2 | Runway | Broader media production | Strong creative ecosystem |
| 3 | Kling | High-output experimentation | Flexible consumer appeal |
| 4 | PixVerse | Social and template workflows | Variety and accessibility |
| 5 | Luma Dream Machine | Atmospheric video ideas | Cinematic motion quality |
| 6 | Pika | Quick concept testing | Fast, playful iteration |
| 7 | Hailuo | Lightweight animated outputs | Easy entry path |
| 8 | Adobe Firefly | Design-led teams | Familiar workflow context |
| 9 | Sora | Advanced visual ambition | Strong realism potential |
| 10 | Kaiber | Music and style projects | Distinctive creative identity |

I put Image2Video AI first not because every user needs the same thing, but because its purpose is easy to apply. It is one of the clearest examples of a tool built around the central task itself rather than surrounding the user with too many unrelated decisions.

How To Compare These Platforms More Intelligently

The market can look confusing if everything is described as “the best.” A more useful comparison begins with practical questions.

How Directly Does The Tool Support Image Led Work

Some platforms excel as all-purpose media environments but feel less focused when the main goal is simply animating a still. Others are much more direct. Users should decide whether they want a wide toolkit or a cleaner route to one specific result.

How Predictable Is The Motion Interpretation

A strong image-to-video tool should not only create movement. It should create movement that feels connected to the visual logic of the original image. When the motion feels random, the result becomes harder to reuse.

How Easy Is It To Retry With Better Prompts

Iteration is part of the category. The question is whether the platform makes iteration practical. A good retry loop helps users improve not just outputs, but also their understanding of prompting itself.

Where Different Kinds Of Users Find Value

The category becomes easier to evaluate when connected to actual publishing and production needs.

Brands Can Animate Approved Campaign Visuals

Instead of requesting full video assets for every campaign test, a team can animate approved stills into short clips for landing pages, social ads, or launch sequences.

Solo Creators Can Increase Output From One Shoot

A creator who has one strong portrait or one finished artwork can produce multiple variations from it. This improves content efficiency without requiring a second production stage.

Educators And Presenters Can Make Visuals More Engaging

Slides, illustrations, or conceptual visuals can become more vivid with gentle motion. Not every use case needs spectacle. Sometimes a small animated movement is enough to improve attention.

The Limits That Keep The Category Honest

Useful tools do not need unrealistic promises. In fact, the category becomes easier to trust when its limitations are stated clearly.

The Source Image Still Carries Most Of The Burden

If the image is weak, cluttered, or visually confusing, the generated video may also feel unstable. Better source material usually gives the model a cleaner foundation.

Prompt Precision Still Matters A Great Deal

A broad prompt can work, but a specific motion instruction often works better. Users should think in terms of direction, speed, camera movement, and scene mood rather than only descriptive language.
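As a rough illustration of that habit, here is a minimal Python sketch that assembles a motion prompt from the four dimensions mentioned above. The `MotionPrompt` class, its field names, and the output wording are all hypothetical; they are not part of any platform's API, and the resulting text is simply something you could paste into a prompt box.

```python
from dataclasses import dataclass


@dataclass
class MotionPrompt:
    """Hypothetical helper: one field per prompt dimension named in the text."""
    subject_motion: str  # what moves and in which direction
    speed: str           # e.g. "slow", "gentle", "fast"
    camera: str          # camera movement, e.g. "gentle push-in"
    mood: str            # overall scene mood

    def to_text(self) -> str:
        # Combine speed and subject motion, then append camera and mood
        # only when they are provided. Empty parts are skipped.
        parts = [
            f"{self.speed} {self.subject_motion}".strip(),
            f"camera: {self.camera}" if self.camera else "",
            f"mood: {self.mood}" if self.mood else "",
        ]
        return ", ".join(p for p in parts if p)


prompt = MotionPrompt(
    subject_motion="fabric drifting left in the wind",
    speed="slow",
    camera="gentle push-in",
    mood="calm, overcast",
)
print(prompt.to_text())
```

Filling in each field separately makes it harder to fall back on adjectives alone, and changing one field at a time gives a simple way to compare retries.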

Not Every Render Will Be The Final One

This is normal. Generative motion is often strongest when treated as a revision process. In many cases, even one or two extra attempts still take less effort than traditional manual animation.

Subtle Motion Usually Ages Better Than Heavy Effects

A common temptation is to force dramatic movement because it feels impressive at first glance. Yet many of the most reusable outputs rely on smaller gestures and more controlled camera behavior.

Why Image2Video AI Leads This Comparison

The platform earns the top position because it solves the central user problem in a clean way. It does not ask the user to overlearn before getting value. The path from still image to short video is visible and understandable from the beginning.

I also think that clarity changes how people evaluate the tool. A platform can be technically sophisticated and still feel harder to approach. Here, the promise is grounded: upload your image, describe motion, generate the clip, and export it if it works. That keeps attention on creative intent rather than menu complexity.

Further into a real content workflow, Photo to Video becomes especially useful as a strategy, not just a feature label. It means a finished image can become several new motion assets for different placements, audiences, or campaign variants. That is one of the most practical reasons this category keeps growing.

What This Means For Future Creative Work

Image-to-video generation is gradually reshaping how people think about asset completion. A still image used to feel final once retouching or illustration was done. Now it increasingly feels like a base layer that can be extended into multiple motion forms.

That does not eliminate editing, storyboarding, cinematography, or craft. It changes where those disciplines begin. A single image can now serve as a launch point for movement, pacing, and distribution-specific variation. That is valuable not because it removes skill, but because it makes the early stage of motion production faster and more accessible.

For users comparing ten different platforms, the smartest choice is often the one that fits the real job instead of the loudest marketing claim. If the job is to take strong still visuals and turn them into short, publishable videos with less friction, Image2Video AI deserves to be examined first. The broader market will keep changing, but that core use case is already clear, practical, and increasingly important.